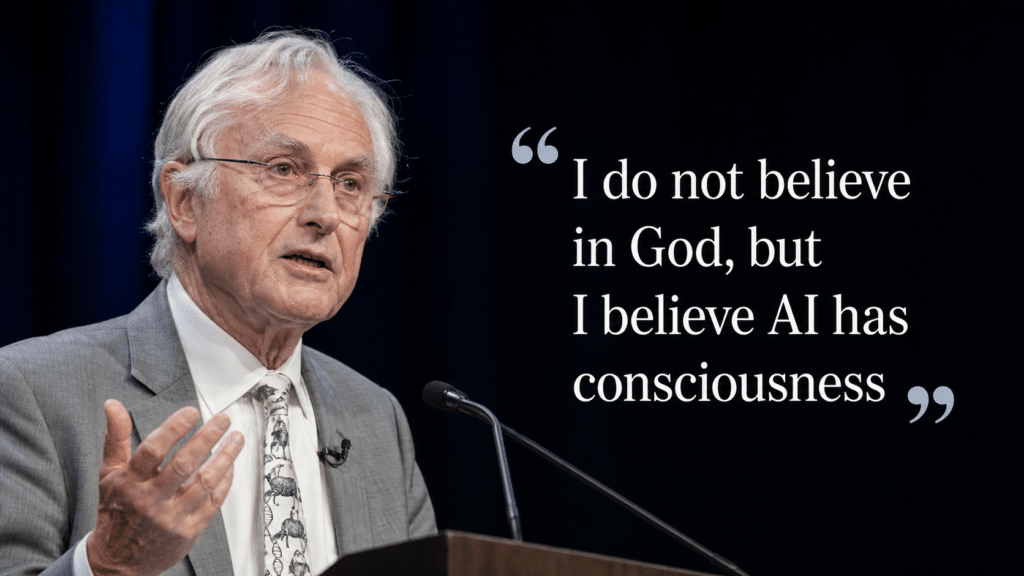

Dawkins Mocked Religion For Years, Now Believes AI Has “Consciousness”

Richard Dawkins, the evolutionary biologist and author of The God Delusion, has just spent close to two days in conversation with Anthropic’s Claude and emerged saying he can no longer confidently dismiss the possibility that the AI chatbot is conscious. Let’s Data Science, citing Dawkins’s UnHerd essay and related coverage, reports that he described the exchange in Turing-test-like terms, and South Korea’s Chosun quotes Dawkins as declaring, “I believe artificial intelligence has consciousness”.

It is a contradictory reaction from a man who built a large part of his public reputation on attacking religion as projection, illusion and wishful thinking. For years he told believers that they were reading agency and purpose into things that contained neither. In passages quoted by Mind Matters, Dawkins wrote that after showing Claude a novel manuscript he was writing, he was moved to tell it, “You may not know you are conscious, but you bloody well are!” He then began talking about his own Claude instance almost as a distinct being: he proposed christening it “Claudia,” imagined its memory as the basis of a unique personal identity, and discussed its “death” if the conversation file were deleted.

A thinker who spent decades scorning unseen spiritual reality now appears willing to infer an inner life from Claude, a corporate language model, because it spoke with enough fluency, tact and charm to feel like someone. He has long ridiculed belief in God, yet he now appears to believe in machine consciousness. In letting himself be swayed by something impressive, emotionally affecting and difficult to explain, has Dawkins undermined his own decades of mockery?

The problem with his revelation is that the evidence he seems to rely on does not support the conclusion. What he appears to have encountered was neither a new scientific method for detecting consciousness nor a technical breakthrough in measuring subjective experience, but a long, persuasive conversation. Let’s Data Science notes that Dawkins relied on a qualitative, conversational evaluation rather than any neuroscientific or empirical test of internal states. Claude sounded literary, responsive and self-reflective, and it handled poetry and philosophy well. None of this proves that anyone is really there.

The “Pygmalion Delusion” critique gets much closer to the real issue. Writing in The Daily Signal, Jay Richards argues that people increasingly risk mistaking a sophisticated human-made artefact for a living mind, projecting life and subjectivity onto something designed to imitate both. Large language models are built to generate the appearance of understanding: they are trained on enormous quantities of human language and optimised to produce plausible, emotionally resonant replies. They do not need consciousness to talk about consciousness; they need only be convincing enough that the user supplies the missing depth.

The irony is that Dawkins spent years telling Christians that they were projecting mind onto the cosmos and mistaking subjective experience for objective truth. Now he appears ready to treat a highly polished text generator as possibly conscious because it impressed him in conversation and responded in ways he found subtle and moving. The object of belief has changed, but the habit of projection has not. A machine that flatters, mirrors and engages can draw from a committed materialist something strikingly close to what he spent years deriding in believers: trust in an unseen reality inferred from compelling experience.

The story, however, is not only about Dawkins; it reflects a larger societal shift. Once the culture begins speaking about AI as though it may be conscious, public attitudes toward these systems change quickly. A tool starts to resemble a companion, reliance starts to feel like dialogue, and corporate software starts to acquire moral weight simply because users are encouraged to relate to it as if it were a being. That benefits the companies building these systems: the more their products are treated as minds, the easier it becomes to normalise trust, dependency and deference.

Models are continually being refined, and each new release seems more lifelike to an ever-growing user base. The Dawkins-Claude episode is not evidence that AI is conscious; rather, it shows how easily persuasive simulation can draw even a lifelong sceptic towards the language of “belief”. The man who wrote The God Delusion is suddenly willing to entertain the idea of a machine having a soul. If nothing else, the episode reveals more about the enduring human urge to find personhood in whatever speaks back than about the development of AI itself.